实验室重点研究普适计算环境中人机物之间自然高效的信息交换,人机异质智能协同等挑战性难题,创建理论方法、开放技术平台、建立创新高地。研究内容包括:

❖ 自然接口交互意图理解(输入法、穿戴交互、传感、普适健康监测)

❖ 交互路径优化(界面语义理解、AIoT情境感知、信息无障碍、自然交互操作系统)

❖ 人机混合智能增强(多模态信息的理解与生成、情感计算和数字虚拟人、机器人交互、人机协同决策)

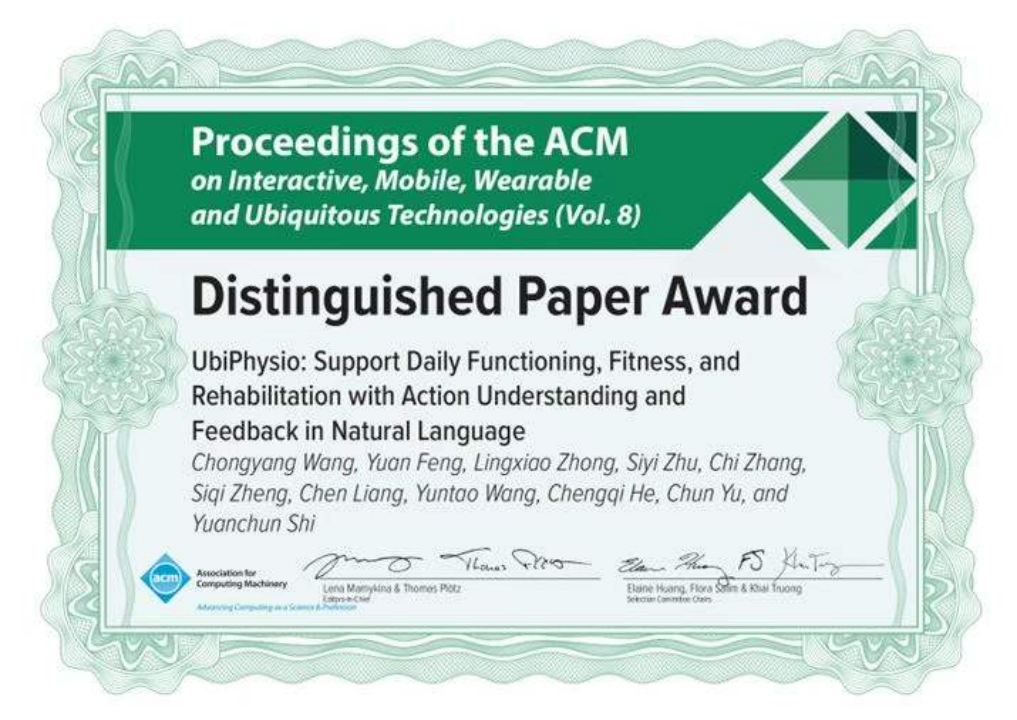

团队承担国家重点研发计划、自然科学基金重点等多项项目,同时与头部企业建立联合实验室,顶会论文成果近年(2016-)跃居CSRankings人机交互领域(团队主要成员发表数)世界第一,性能卓越的技术成果实用于七亿多智能终端上。实验室科研成果曾两次获得国家科技进步二等奖等奖项。

The lab studies challenging problems in pervasive computing environments, such as natural and efficient information exchange among humans, computers, and physical objects, and collaboration between heterogeneous human and machine intelligence. It aims to develop theories and methods, build open technology platforms, and establish a hub for innovation. Research topics include:

❖ Interaction intent understanding for natural interfaces (input methods, wearable interaction, sensing, pervasive health monitoring).

❖ Interaction path optimization (interface semantic understanding, AIoT context awareness, information accessibility, natural-interaction operating systems).

❖ Human-machine hybrid intelligence augmentation (multimodal information understanding and generation, affective computing and digital virtual humans, robot interaction, human-machine collaborative decision-making).

The team has undertaken numerous projects, including projects under the National Key R&D Program and key projects of the Natural Science Foundation, and has established joint laboratories with leading enterprises. In recent years (2016-), counted by the top-venue publications of its core members, the team has ranked first in the world in human-computer interaction on CSRankings. Its high-performance technologies have been deployed on more than 700 million smart devices. The lab's research has twice won the Second Prize of the National Science and Technology Progress Award, among other honors.